Previously (1, 2), I’ve chopped one picture into square sections to make a new image. This time, I want to take freeform sections from a larger image to make a smaller one. This is basically like taking scissors to a magazine, then putting the pieces together to make a new picture. Let’s call the magazine the “source” and the desired picture the “target.”

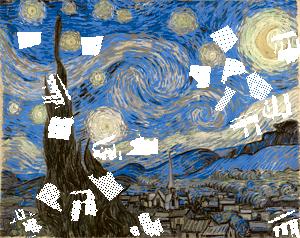

The first step to solving this is to break the target image along edges, creating a segmented image. Then for each section, I’ll find where it best matches the source. There are details, of course.

First, I need to match the color palettes of the source and target. Second, I need to ensure that regions of the source aren’t re-used, which I do by adding a very large penalty to already-used regions in the optimization.
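As a rough illustration of the palette step, here is one simple way it could be done: shift and scale each color channel of one image so its mean and standard deviation match the other's. This is an assumption on my part for the sketch, not necessarily the matching used for the images in this post, and `match_palette` is a made-up name.

```python
import numpy as np

def match_palette(image, reference):
    """Per-channel mean/std transfer (an assumed, simple palette match).

    Both inputs are H x W x C arrays with values in [0, 255].
    """
    img = image.astype(np.float64)
    ref = reference.astype(np.float64)
    img_mean, img_std = img.mean(axis=(0, 1)), img.std(axis=(0, 1))
    ref_mean, ref_std = ref.mean(axis=(0, 1)), ref.std(axis=(0, 1))
    # Guard against flat channels, where the std is zero.
    scale = ref_std / np.maximum(img_std, 1e-8)
    out = (img - img_mean) * scale + ref_mean
    # Keep the result in the displayable range.
    return np.clip(out, 0.0, 255.0)
```

Either image can play the role of `reference`; the point is only that both end up with comparable color statistics before any matching happens.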

How should it be segmented? My somewhat-lazy attempt is to cluster the pixels by color and position. This is k-means clustering, solved with Lloyd’s algorithm, which converges nicely. Then I filter the segments to remove any small-scale features, making them easier to cut by hand.
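A minimal sketch of that clustering, assuming each pixel becomes a feature vector of its color channels plus its (row, column) position. The `position_weight` knob, the evenly spaced initial centers, and the function names are my own choices for the example; the small-feature filtering step is left out.

```python
import numpy as np

def pixel_features(image, position_weight=1.0):
    """Stack color and (weighted) position into one feature row per pixel."""
    h, w = image.shape[:2]
    ys, xs = np.mgrid[0:h, 0:w]
    color = image.reshape(h * w, -1).astype(np.float64)
    position = np.stack([ys.ravel(), xs.ravel()], axis=1) * position_weight
    return np.hstack([color, position])

def lloyd(features, k, iters=20):
    """Plain Lloyd's algorithm: alternate assignment and center updates."""
    # Assumed initialization: k pixels evenly spaced through the image.
    start = np.linspace(0, len(features) - 1, k).astype(int)
    centers = features[start].copy()
    for _ in range(iters):
        # Assignment step: nearest center by squared Euclidean distance.
        # This builds an N x k x D array, so it only suits small images.
        d2 = ((features[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        # Update step: move each center to the mean of its members.
        for j in range(k):
            members = features[labels == j]
            if len(members):
                centers[j] = members.mean(axis=0)
    return labels, centers

def segment(image, k, position_weight=1.0):
    """Return an H x W array of segment labels."""
    h, w = image.shape[:2]
    labels, _ = lloyd(pixel_features(image, position_weight), k)
    return labels.reshape(h, w)
```

Raising `position_weight` makes the segments more compact; lowering it lets color dominate, so segments can become scattered.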

Now how to actually do this optimization? The easiest way is to do it greedily. I’ll work from the center outward, find the best match for each section, then mask it out of the source. The initial guess is to match the average color of the region using a large circular filter, then fine-tune with random search. This works pretty well!
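Here is a simplified sketch of the greedy pass, covering only the initial guess. It makes several assumptions: a square box filter stands in for the circular one, the used region is masked as a square rather than the segment's true shape, and the random-search fine-tuning is omitted. The names `box_mean`, `greedy_place`, `radius`, and `penalty` are made up for the example.

```python
import numpy as np

def box_mean(image, radius):
    """Mean color over a (2r+1) x (2r+1) window around each pixel."""
    size = 2 * radius + 1
    pad = ((radius, radius), (radius, radius), (0, 0))
    padded = np.pad(image.astype(np.float64), pad, mode="edge")
    # Integral image with a leading row and column of zeros.
    integral = np.zeros((padded.shape[0] + 1, padded.shape[1] + 1, padded.shape[2]))
    integral[1:, 1:] = padded.cumsum(axis=0).cumsum(axis=1)
    h, w = image.shape[:2]
    total = (integral[size:size + h, size:size + w]
             - integral[:h, size:size + w]
             - integral[size:size + h, :w]
             + integral[:h, :w])
    return total / size**2

def greedy_place(target, labels, source, radius, penalty=1e9):
    """Pick a source location for each target segment, center outward."""
    h, w = labels.shape
    source_mean = box_mean(source, radius)
    used = np.zeros(source.shape[:2], dtype=bool)

    def center_distance(seg_id):
        ys, xs = np.nonzero(labels == seg_id)
        return np.hypot(ys.mean() - (h - 1) / 2, xs.mean() - (w - 1) / 2)

    placements = {}
    for seg_id in sorted(np.unique(labels), key=center_distance):
        seg_color = target[labels == seg_id].mean(axis=0)
        # Cost: squared distance between local source color and segment color.
        cost = ((source_mean - seg_color) ** 2).sum(axis=2)
        # Make already-used locations extremely expensive.
        cost[used] += penalty
        y, x = np.unravel_index(cost.argmin(), cost.shape)
        placements[int(seg_id)] = (int(y), int(x))
        # Mask a square of half-width 2r so later windows cannot overlap this one.
        r2 = 2 * radius
        used[max(0, y - r2):y + r2 + 1, max(0, x - r2):x + r2 + 1] = True
    return placements
```

Because each choice is made once and never revisited, early segments get the best parts of the source, which is one reason to start from the center of the target.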

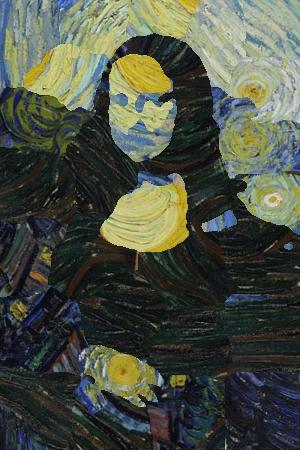

How does this look in practice? I’ll try making a temple out of Starry Night. The following images show the different stages of the process.

There are lots of directions for improvement, but this works pretty well! Better image segmentation could be useful. Changing the number of segments makes a big difference. The relative sizes of the source and target also matter.

Overall, the main strength of this method is that the segmentation respects image edges. This makes it much easier to forgive mismatches within each section. Of course, that means the algorithm will be less effective on images with large smooth gradients, where there are few edges to follow.